Entropy units

9/10/2023

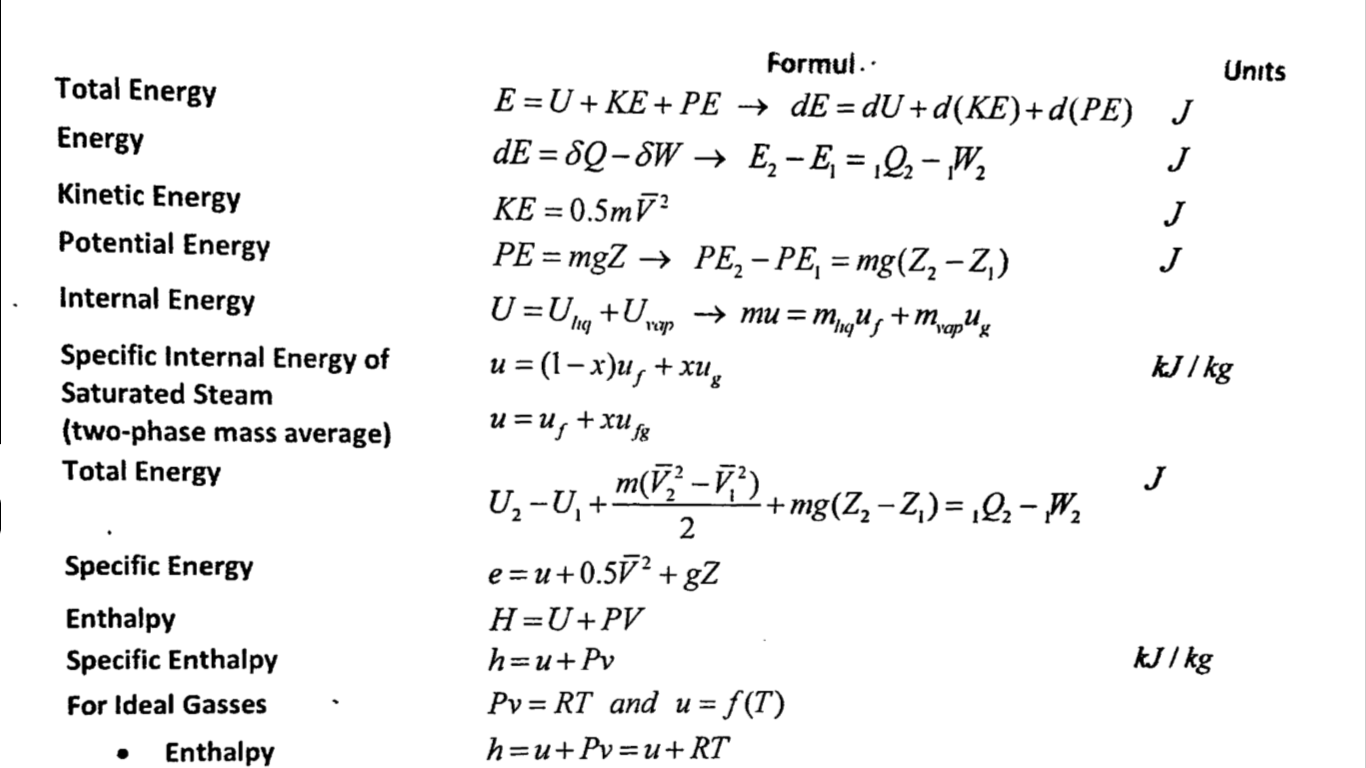

Where $S$ is the entropy, $k_B$ is Boltzmann's constant, $\ln$ is the natural logarithm, and $W$ is the number of possible states. In a system that can be described by variables, those variables may assume a certain number of configurations. If each configuration is equally probable, the entropy is the natural logarithm of the number of configurations multiplied by Boltzmann's constant, $S = k_B \ln W$. In a more general sense, entropy is a measure of the probability and the molecular disorder of a macroscopic system.

Measurement: In an isothermal process, the change in entropy ($\Delta S$) is the heat exchanged reversibly ($Q$) divided by the absolute temperature ($T$), $\Delta S = Q/T$. For any reversible thermodynamic process, it can be written as the integral of $dQ/T$ from the initial state of the process to its final state, $\Delta S = \int_{i}^{f} dQ/T$. The temperature in these equations must be measured on the absolute (Kelvin) scale.

Entropy change is related to enthalpy change as $\Delta S = \Delta H / T$, where $T$ is the temperature of the process and $\Delta H$ is the enthalpy change at constant pressure. This holds because $\Delta H$ is the heat absorbed or evolved in a process at constant temperature $T$ and pressure $P$.

At absolute zero (0 K), a perfect crystalline substance has no disorder and its entropy is zero.

Entropy is an extensive property of matter, expressed as energy divided by temperature; its dimension is energy (as heat) × temperature⁻¹. The SI unit of entropy is J/K (joules per kelvin). In CGS-based usage, entropy is expressed in calories per kelvin (cal K⁻¹), referred to as entropy units (eu). Since entropy also depends on the quantity of the substance, molar entropy is given in calories per kelvin per mole, which is likewise denoted eu.
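The formulas above can be tried out numerically. The following is a minimal Python sketch, not a reference implementation: the function names are hypothetical, the input values are purely illustrative, and the conversion factor assumes the thermochemical calorie (4.184 J), which the text does not specify.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (fixed value in the 2019 SI)
JOULES_PER_CALORIE = 4.184  # assumption: thermochemical calorie


def boltzmann_entropy(num_states):
    """S = k_B * ln(W) for W equally probable configurations, in J/K."""
    if num_states < 1:
        raise ValueError("number of states must be at least 1")
    return K_B * math.log(num_states)


def isothermal_entropy_change(heat_joules, temperature_kelvin):
    """Delta S = Q / T for heat exchanged reversibly at constant T, in J/K."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive on the Kelvin scale")
    return heat_joules / temperature_kelvin


def eu_to_joules_per_kelvin(entropy_eu):
    """Convert an entropy in cal/K (entropy units, eu) to J/K."""
    return entropy_eu * JOULES_PER_CALORIE


if __name__ == "__main__":
    # A single possible configuration gives zero entropy.
    print(boltzmann_entropy(1))
    # Illustrative values only: 1000 J absorbed reversibly at 300 K.
    print(isothermal_entropy_change(1000.0, 300.0))
    # One entropy unit expressed in SI units.
    print(eu_to_joules_per_kelvin(1.0))
```

The same `isothermal_entropy_change` function covers the relation $\Delta S = \Delta H / T$ when the heat passed in is the enthalpy change at constant temperature and pressure. The temperature check reflects the requirement that $T$ be an absolute temperature.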

Units of entropy: entropy is defined as a quantitative measure of the disorder or randomness in a system.

In ergodic theory, entropy is one of the most important invariants. The basic concept is the entropy $h(S)$ of a transformation $S$ of a Lebesgue space $(X, \mu)$ (see Metric isomorphism), which is built from finite measurable decompositions (measurable partitions) $\xi$ of the space. [...] has been proved for a certain general class of transformation groups. For smooth dynamical systems with a smooth invariant measure, a connection has been established between the entropy and the Lyapunov characteristic exponents of the equations in variations (see the references below).

The name "entropy" is explained by the analogy between the entropy of dynamical systems and that in information theory and statistical physics, right up to the fact that in certain examples these entropies are the same. The analogy with statistical physics was one of the stimuli for introducing new concepts into ergodic theory (even in a not purely metric context and for topological dynamical systems, cf. Topological dynamical system), such as "Gibbsian measures", the "topological pressure" (an analogue of the free energy) and the "variational principle" for the latter (see the references to $Y$-...).

The term sequence entropy is used in the English literature. For several useful recent references concerning the computation of entropy, see the references below.

References:

- Kolmogorov, "A new metric invariant of transitive dynamical systems, and Lebesgue space automorphisms", Dokl.
- Kolmogorov, "On entropy per unit time as a metric invariant of automorphisms", Dokl.
- Sinai, "On the notion of entropy of dynamical systems", Dokl.
- Rokhlin, "Lectures on the entropy theory of transformations with invariant measure", Russian Math.
- Billingsley, "Ergodic theory and information", Wiley (1965)
- Safonov, "Information parts in groups", Math.
- Kieffer, "A generalized Shannon–McMillan theorem for the action of an amenable group on a probability space", Ann.
- Krengel, "Entropy of conservative transformations", Z.
- Kushnirenko, "Metric invariants of entropy type", Russian Math.
- Breiman, "The individual ergodic theorem of information theory", Ann.
- Breiman, "Correction to 'The individual ergodic theorem of information theory'", Ann.
- Chung, "A note on the ergodic theorem of information theory", Ann.
- Pitskel', "Nonuniform distribution of entropy for processes with a countable set of states", Probl.
- Ionesco-Tulcea, "Contributions to information theory for abstract alphabets", Arkiv for Mat.
- Sucheston, "On convergence of information in spaces with infinite invariant measure", Z.
- Millionshchikov, "A formula for the entropy of smooth dynamical systems", Differential Eq.
- Pesin, "Characteristic Lyapunov exponents, and smooth ergodic theory", Russian Math.
- Mañé, "A proof of Pesin's formula", Ergod.
- Ruelle, "Mean entropy of states in classical statistical mechanics", Comm.
- Walters, "An introduction to ergodic theory", Springer (1982)
- Wojtkowski, "Measure theoretic entropy of the system of hard spheres", Ergod.
